Another step on the way to an AI future we can’t quite imagine

As much as I enjoy dabbling and experimenting with the new, shiny tools and techniques we get on an almost daily basis from AI companies, I keep going back to “OK…so what’s next, where does this take us?”. There’s likely a huge gap between harmless memes and the armageddon of Terminator 2.

Some of this is pushing at things we don’t quite have the language for or, when we push, can barely visualise. There are two related things I’ve been finding fascinating in the background: dynamic software and not being agent-driven.

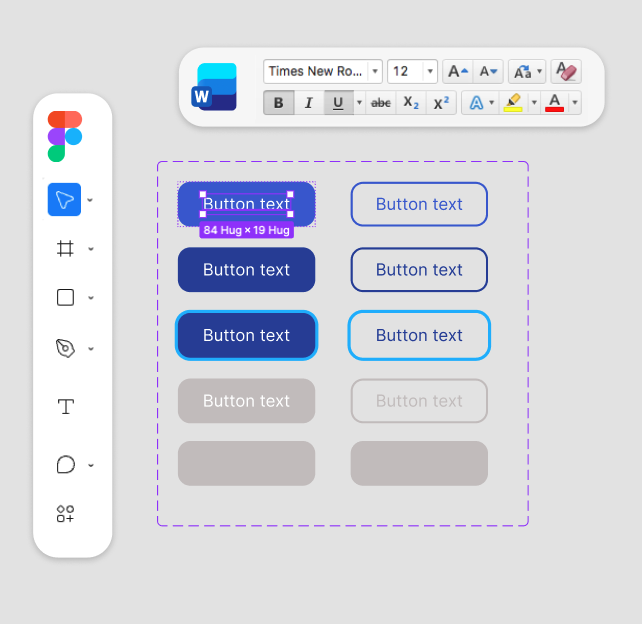

Currently the design tool of choice for most people is Figma, so let’s use this as an example of what I mean. Today, this comprises a complex, robust product shipped to millions of users with updates carefully managed and all that goes with it. But what might an alternative look like?

What if we also put aside the idea of the chatbot, and the assumption that the only way we can invoke anything is through conversation? There’s something here about distilling it down to a signal from the user, in a particular context, that requires a reaction. That signal could be a prompt to initiate a conversation as much as tapping something in an interface. In my head, we have a ‘gateway’ that’s agentic under the hood but not always about prose or threads of chat, though that can be an aspect of it. A gateway can create a ‘workspace’, kind of a base for the dynamic software. These constructs could help us understand how to deal with a total blank canvas. Your need then determines the form the software takes. Using something as complex as Photoshop as an example, perhaps you need to do a specific task and so your software only provides you with the specific controls you need for that task.
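To make that a little more concrete, here’s a minimal sketch of how I picture the pieces fitting together. Everything in it is hypothetical: the names `Signal`, `Gateway`, `Workspace` and `Control` are mine, the task labels are made up, and a plain lookup stands in for whatever agentic reasoning would really work out what you need.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Control:
    """A fragment of UI, tagged with the tasks it is useful for (hypothetical)."""
    name: str
    tasks: frozenset[str]


@dataclass(frozen=True)
class Signal:
    """Something the user did, plus the need we assume was inferred from context."""
    source: str  # e.g. "tap" or "prompt"; chat is only one possible source
    task: str    # assumed to be worked out upstream by something agentic


@dataclass
class Workspace:
    """The base for the dynamic software: only the controls this task needs."""
    task: str
    controls: list[Control] = field(default_factory=list)


class Gateway:
    """Turns a signal into a workspace by drawing from a pool of controls."""

    def __init__(self, pool: list[Control]) -> None:
        self.pool = list(pool)

    def handle(self, signal: Signal) -> Workspace:
        # A simple filter stands in for the agentic part of the gateway.
        relevant = [c for c in self.pool if signal.task in c.tasks]
        return Workspace(task=signal.task, controls=relevant)


# A made-up pool of controls, loosely in the spirit of an image editor.
POOL = [
    Control("crop", frozenset({"resize-image", "retouch-photo"})),
    Control("scale", frozenset({"resize-image"})),
    Control("healing-brush", frozenset({"retouch-photo"})),
    Control("auto-layout", frozenset({"build-screen"})),
]

gateway = Gateway(POOL)
tap = gateway.handle(Signal(source="tap", task="resize-image"))
prompt = gateway.handle(Signal(source="prompt", task="resize-image"))

print([c.name for c in tap.controls])  # ['crop', 'scale']
```

The detail I care about is that the tap and the prompt end up in the same workspace: the need shapes the software, not the way you happened to ask for it.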

In Figma’s case, it does many things over many discrete products, but perhaps in the workspace, you have fragmented controls that are assembled contextually to help you solve your problem. There’s a mix of context, simulated intuition and explicit intent, but what we have gets us to a solution quickly. While the generated outcome could be of dubious quality depending on training data, this way we’re part of the process and collaborating with more than a single agent, moving away from the 1:1 working relationship and towards more of an entire ecosystem. You can talk with elements of the ecosystem if you want or need to, but a flavour of UI or input/output can be derived from how you like to work at this moment. The UI could adapt more to the context of your current task with the right cues.

So what is software? It doesn’t mean we’re just creating fragments of UI, though that might be part of it. We’re creating structure and storytelling and context and rationale and knowledge and guidelines and so on… A control in a chunk of UI doesn’t exist in a vacuum; it forms an agreement or contract with the other parts of the workspace to provide a specific kind of value for what you need to do. Maybe software as we know it becomes something more ephemeral, but not without value.

These ideas mean that agents don’t have to be constrained to chat interfaces. While that might be what we’re currently most familiar with, I think there’s something more we’re missing: what could agents do if we weren’t embroiled in conversation? If you explore what they are and could be capable of, isn’t chat just to please us?

In many ways, LLMs not having knowledge or memory isn’t that big a deal. We can architect the scaffolding and structures to break the existing conventions we have and see what else might be possible. Maybe we don’t need AGI, we just need to rethink our roles in this picture?

So the likes of Figma become something you can pull in and use anywhere, showing only the part you need. We can conjure up the kind of thing we want to create and the ‘software’ available to us becomes a dynamic pool to draw from. I’m sure we’d still have subscriptions and all of that…but how they manifest would be free of the weight and waste of having a centralised product. A2UI and MCP Apps are a starting point, but they hint at something bigger and are just a step towards whatever that might be.

As consumers, is this easy and convenient? If any of this were where we ended up, I think it would rightly challenge a lot of UX best practices and push us to reassess what still holds true and adds value in a new model. If there’s a dynamic workspace with many parts of traditional software present in contextual ways, then does it stop being predictable? How are new features bedded in? How do we learn from users when there are so many permutations of what using our products might mean?

So after I go off on one and let my imagination go, I start to wonder “OK…so what’s next, where does this take us?” What might come after any of this? I have no idea and I think that’s what I love. There are legitimate fears and impacts that I struggle with around anything AI-based that don’t go away, but it fires up my curiosity and imagination in a way that twelve-year-old me would be so happy about. Under the excitement of experimentation with the new tool or technique of the week, it’s worth asking where these small changes might lead. How might we challenge or reimagine some current expectations in a way that, while unfamiliar to us now, might actually be a good thing?